awaithumans


The human layer for AI agents. Your agents already await promises. Now they can await humans.

awaithumans — HITL infrastructure for AI agents


Docs · Quickstart · Examples · Discord

HITL infrastructure for AI agents — open source. A single primitive (await_human() / awaitHuman()) your agent calls when it needs a human. A real person reviews via Slack / email / a built-in dashboard, submits a typed response, and your agent resumes — like awaiting any other promise.

    from awaithumans import await_human
    from pydantic import BaseModel

    # Payload the reviewer sees.
    class RefundRequest(BaseModel):
        order_id: str
        amount_usd: float

    # Typed response the reviewer submits.
    class Decision(BaseModel):
        approved: bool
        note: str | None = None

    # Call this from inside an async function.
    decision = await await_human(
        task="Approve refund request",
        payload_schema=RefundRequest,
        payload=RefundRequest(order_id="A-4721", amount_usd=180),
        response_schema=Decision,
        timeout_seconds=900,
    )

    if decision.approved:
        process_refund(...)

The agent waits on `decision` like it waits on any other Promise or Future. A human gets notified (Slack, email, dashboard), reviews the request, and submits a typed response. The agent resumes with the typed answer.
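To make the "await a human like any other Future" idea concrete, here is a minimal standard-library sketch. It does not use awaithumans at all: `simulated_human` and the local `Decision` dataclass are hypothetical stand-ins, and the real library may work quite differently under the hood.

```python
import asyncio
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    approved: bool
    note: Optional[str] = None


async def simulated_human(inbox: asyncio.Future) -> None:
    # Hypothetical stand-in for a reviewer submitting a form
    # in Slack, email, or the dashboard.
    await asyncio.sleep(0.01)
    inbox.set_result(Decision(approved=True, note="Receipt matches the order"))


async def agent() -> Decision:
    loop = asyncio.get_running_loop()
    inbox: asyncio.Future = loop.create_future()
    reviewer = asyncio.create_task(simulated_human(inbox))
    # The agent suspends here until the "human" answers or the timeout fires.
    decision = await asyncio.wait_for(inbox, timeout=1.0)
    await reviewer
    return decision


decision = asyncio.run(agent())
print(decision.approved, decision.note)
```

The point of the sketch is only the shape of the control flow: the agent code reads top to bottom, and the human's answer arrives as an ordinary typed value.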

The problem

Every production agent hits a wall where the model can't or shouldn't proceed alone. Three distinct walls, each permanent:

1. Judgment. The agent has the information but can't be trusted to decide. High liability, regulation, or consequence: KYC approvals, refund sign-offs, content moderation escalations. A human carries the accountability.
2. System-uncertainty. The agent doesn't know the state of the world. The source of truth is in a bank dashboard, a partner system, a manual file. No model can close this gap. A human investigates and tells the agent what's true.
3. Embodiment. The task requires a real person: signing, calling, picking something up, passing a CAPTCHA. Not a model problem.

Better models don't solve walls 2 and 3. awaithumans makes the call to a human a first-class primitive instead of a pile of bespoke glue per project.

Why awaithumans

| | awaithumans | humanlayer | DIY glue code |
|---|---|---|---|
| Maintained | ✅ Active development | ❌ Abandoned | - |
| Setup time | One command (`awaithumans dev`) | Per-customer rebuild | Weeks |
| Channels | Slack + email + built-in dashboard | Slack only | Build each yourself |
| Typed responses | ✅ Pydantic (Python) / Zod (TS), schema-validated end to end | Partial | Build each yourself |
| Restart-safe | ✅ Stripe-style idempotency: agent resumes across restarts | ❌ | Build each yourself |
| AI pre-verification | ✅ Claude / OpenAI / Gemini / Azure: pre-check the human's answer before the agent trusts it | ❌ | Build each yourself |
| Workflow engines | ✅ Temporal + LangGraph adapters: hand the wait to the engine | ❌ | Build each yourself |
| Self-hostable | ✅ Docker + Postgres in one command | SaaS-only | - |
| License | Apache 2.0 (patent grant) | - | - |

Built by engineers who hit the HITL wall three times in production fintech and ScaleBrick agent systems — and watched the only OSS alternative get abandoned by its founder over per-customer-fork creep. The architecture has exactly four extension points (channels, verifiers, routers, task-type handlers) so no single customer can push the core into the same trap.
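The "Stripe-style idempotency" row in the comparison can be pictured with a small standard-library sketch. Everything here is an assumption for illustration: the `tasks` table, the `create_task` helper, and the caller-supplied key are hypothetical and are not the awaithumans schema or API. The idea is only that a restarted agent which retries with the same key finds its existing task instead of creating a duplicate.

```python
import json
import sqlite3
from typing import Tuple

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE tasks (idempotency_key TEXT PRIMARY KEY, task TEXT, payload TEXT)"
)


def create_task(key: str, task: str, payload: dict) -> Tuple[str, bool]:
    """Return (key, created). A retry with the same key reuses the existing row."""
    cur = conn.execute(
        # The primary key on idempotency_key turns a duplicate insert into a no-op.
        "INSERT OR IGNORE INTO tasks (idempotency_key, task, payload) VALUES (?, ?, ?)",
        (key, task, json.dumps(payload, sort_keys=True)),
    )
    conn.commit()
    return key, cur.rowcount == 1


# First run of the agent creates the task.
_, created_first = create_task("refund-A-4721", "Approve refund", {"order_id": "A-4721"})
# The agent crashes, restarts, and replays the same call with the same key.
_, created_retry = create_task("refund-A-4721", "Approve refund", {"order_id": "A-4721"})
row_count = conn.execute("SELECT COUNT(*) FROM tasks").fetchone()[0]
print(created_first, created_retry, row_count)
```

Because the reviewer only ever sees one task, the retried call can attach to the pending (or already completed) decision rather than asking the human again.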

Quick start

60 seconds. Two terminals. First-time setup needs a browser click; everything else is paste-and-run.

Terminal 1 — server + dashboard:

pip install "awaithumans[server]" && awaithumans dev

Click the setup URL it prints, create your operator account. The dashboard is now at http://localhost:3001.

Terminal 2 — paste this whole block:

    pip install awaithumans pydantic && cat > /tmp/refund.py <<'PY'
    from awaithumans import await_human_sync
    from pydantic import BaseModel

    class RefundRequest(BaseModel):
        order_id: str
        amount_usd: float

    class Decision(BaseModel):
        approved: bool

    d = await_human_sync(
        task="Approve refund of $180?",
        payload_schema=RefundRequest,
        payload=RefundRequest(order_id="A-4721", amount_usd=180),
        response_schema=Decision,
        timeout_seconds=300,
    )

    print("approved" if d.approved else "rejected")
    PY
    python /tmp/refund.py

The script blocks. Open the dashboard, click the pending task, hit Approve. The script unblocks with the typed Decision.

That's the full loop. From here, swap the schema for your own, add notify=["slack:#ops", "email:[email protected]"] to route the task elsewhere, or wrap the call in a Temporal / LangGraph workflow.

More examples — refund, KYC, content moderation, Slack-native, Temporal, LangGraph — in examples/.

What you can build with it

Real production patterns this primitive collapses into a single function call:

- High-value approvals: refunds above a threshold, wire transfers, plan upgrades, contract renewals. Agent prepares the case (Pydantic payload), human signs off with a typed decision (approved + reason).
- KYC / identity review: agent flags borderline documents, human inspects, sends back `verified: bool` with notes. Pair with `verifier=verify_with_claude(...)` to pre-check the reviewer's reasoning.
- Content moderation escalation: AI tags a borderline post; instead of hard-deciding, it calls `await_human()` with the content + AI's reasoning + a Switch for keep/remove. Reviewer's decision flows back into the moderation pipeline.
- Agent-generated code review: your LLM drafts a pull request; before merge, the agent waits for a human to approve via Slack. The "Claim this task" button assigns it to whoever's on rotation.
- Customer-success escalation: support agent answers FAQs; on a complex thread, it escalates to a human with the full transcript as the payload. Human writes the reply, agent posts it.
- Scrape-and-CAPTCHA fallback: automation hits a CAPTCHA wall, calls `await_human()` with the screenshot, a human solves it, agent resumes the scrape.

Anything where an LLM's confidence is too low, the liability too high, or the source of truth lives outside the model's reach — it's HITL-shaped, and this primitive fits.

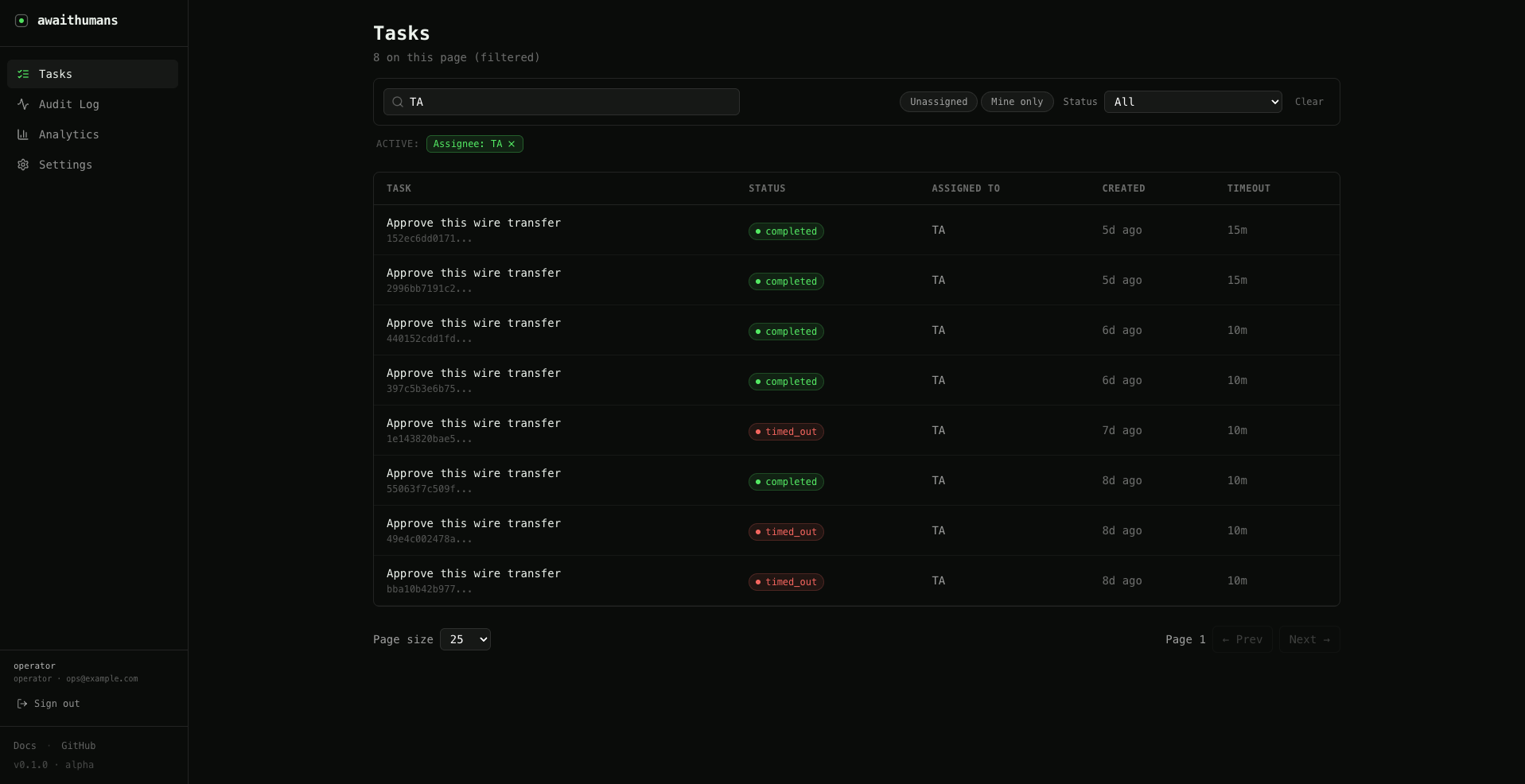

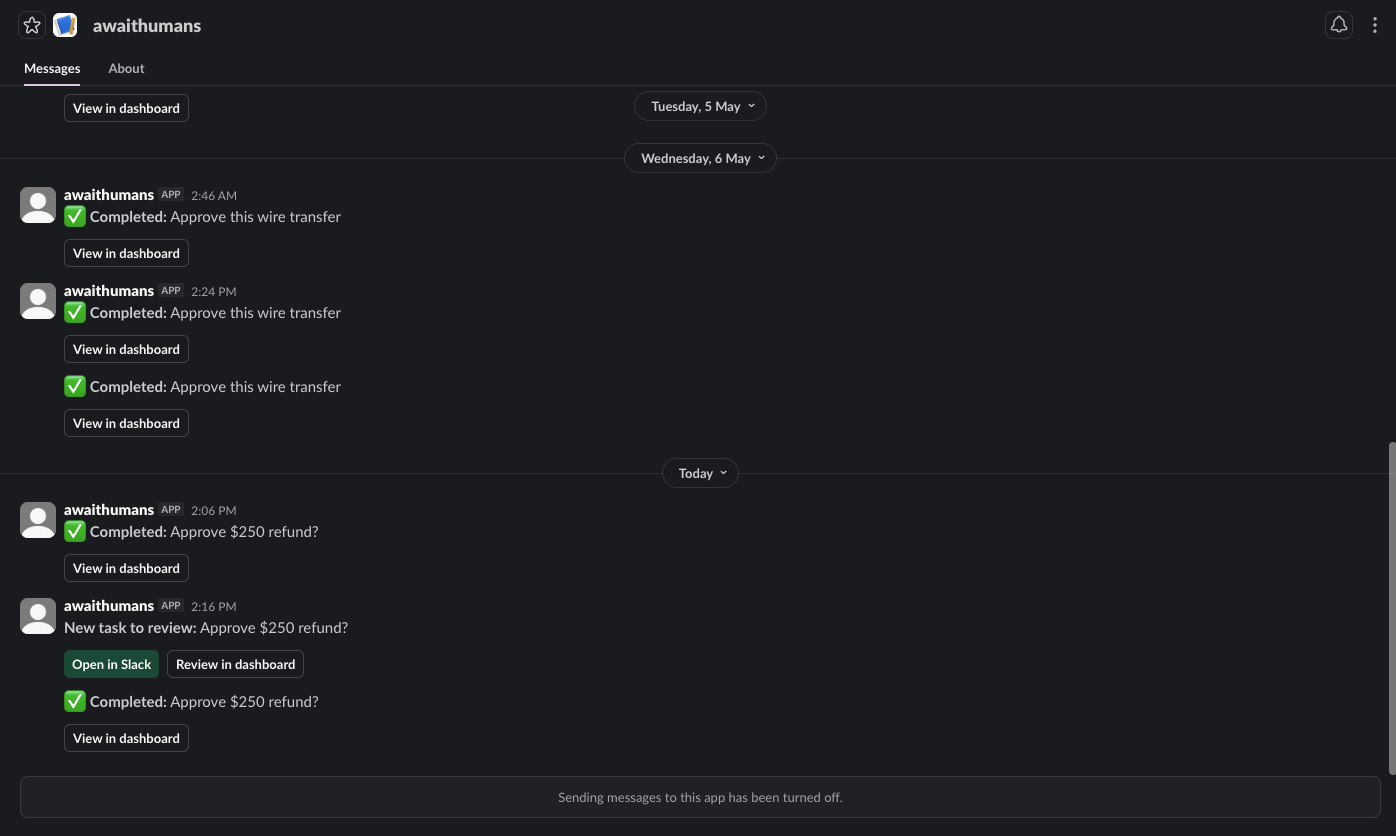

Complete tasks in Slack

Add `notify=["slack:#ops"]` and the task lands in the channel with a "Claim this task" button. First clicker atomically wins; their response form opens as a modal. Completing it unblocks the agent just like the dashboard path.

    decision = await await_human(
        task="Approve refund",
        payload_schema=RefundRequest,
        payload=RefundRequest(order_id="A-4721", amount_usd=250),
        response_schema=Decision,
        timeout_seconds=900,
        notify=["slack:#ops"],
    )

Works for direct messages too (`slack:@U01ABC234`) and email (email:[email protected]).
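"First clicker atomically wins" is usually implemented as a compare-and-set on the task row. The following is a minimal sketch of that technique with SQLite; the `tasks` table, the `claim` helper, and the second user ID are hypothetical and not taken from the awaithumans codebase.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE tasks (id TEXT PRIMARY KEY, claimed_by TEXT)")
conn.execute("INSERT INTO tasks (id, claimed_by) VALUES ('task-1', NULL)")
conn.commit()


def claim(conn: sqlite3.Connection, task_id: str, user: str) -> bool:
    """Return True only for the first user to claim the task."""
    # The WHERE clause makes the update a compare-and-set: it matches
    # zero rows once someone else has already claimed the task.
    cur = conn.execute(
        "UPDATE tasks SET claimed_by = ? WHERE id = ? AND claimed_by IS NULL",
        (user, task_id),
    )
    conn.commit()
    return cur.rowcount == 1


first = claim(conn, "task-1", "U01ABC234")
second = claim(conn, "task-1", "U09XYZ987")
owner = conn.execute(
    "SELECT claimed_by FROM tasks WHERE id = 'task-1'"
).fetchone()[0]
print(first, second, owner)
```

Pushing the check into a single `UPDATE` means two simultaneous clicks cannot both succeed, which a read-then-write in application code would not guarantee.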

Durable mode

When your agent runs inside a workflow engine, you don't want a long-poll hanging on the orchestrator's thread for 15 minutes. The Temporal and LangGraph adapters hand the wait to the engine itself:

Temporal (signal-based suspend + callback):

    from awaithumans.adapters.temporal import await_human_temporal

    decision = await await_human_temporal(
        task="Approve refund",
        payload_schema=RefundRequest,
        payload=...,
        response_schema=Decision,
        timeout_seconds=900,
    )

LangGraph (interrupt/resume):

    from awaithumans.adapters.langgraph import await_human_langgraph

Same `await_human` shape, same typed response. The adapter just changes how the wait is orchestrated.

AI verification

Ask an AI to pre-check the human's work before the agent trusts it. Catches the "human clicked approve without reading" case:

    from awaithumans.verifiers.claude import verify_with_claude

    decision = await await_human(
        task="Approve refund",
        payload_schema=RefundRequest,
        payload=...,
        response_schema=Decision,
        verifier=verify_with_claude(
            instructions="Reject if the note contradicts the approval.",
            max_attempts=2,
        ),
    )

The verifier runs after each human submission. If it fails, the task is re-sent to the human with the verifier's reason attached.
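The submit, verify, re-send cycle described above can be sketched in plain Python. This is an illustration under stated assumptions, not the library's implementation: `collect_verified`, `Verdict`, and the scripted `ask_human` / `verify` callables are all hypothetical, and the sketch assumes `max_attempts` counts human submissions, which the source does not specify.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Decision:
    approved: bool
    note: Optional[str] = None


@dataclass
class Verdict:
    ok: bool
    reason: str = ""


def collect_verified(
    ask_human: Callable[[Optional[str]], Decision],
    verify: Callable[[Decision], Verdict],
    max_attempts: int = 2,
) -> Decision:
    """Ask the human, run the verifier, and re-ask with feedback on failure."""
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        decision = ask_human(feedback)
        verdict = verify(decision)
        if verdict.ok:
            return decision
        feedback = verdict.reason  # shown to the reviewer on the next attempt
    raise RuntimeError(f"verification failed after {max_attempts} attempts: {feedback}")


# Scripted stand-ins for the reviewer and the model-backed verifier.
answers = iter([
    Decision(approved=True, note="Looks fraudulent"),
    Decision(approved=False, note="Looks fraudulent"),
])
seen_feedback = []


def ask_human(feedback: Optional[str]) -> Decision:
    seen_feedback.append(feedback)
    return next(answers)


def verify(decision: Decision) -> Verdict:
    # Toy rule standing in for "Reject if the note contradicts the approval."
    if decision.approved and decision.note and "fraud" in decision.note.lower():
        return Verdict(ok=False, reason="Note contradicts the approval.")
    return Verdict(ok=True)


final = collect_verified(ask_human, verify, max_attempts=2)
print(final.approved, seen_feedback)
```

In this run the first submission is rejected by the toy verifier, the reviewer sees the reason on the second attempt, and only the consistent answer reaches the agent.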

TypeScript

npm install awaithumans

    import { awaitHuman } from "awaithumans";
    import { z } from "zod";

    const RefundRequest = z.object({
      orderId: z.string(),
      amountUsd: z.number(),
    });

    const Decision = z.object({
      approved: z.boolean(),
    });

    const decision = await awaitHuman({
      task: "Approve refund",
      payloadSchema: RefundRequest,
      payload: { orderId: "A-4721", amountUsd: 180 },
      responseSchema: Decision,
      timeoutMs: 900_000,
    });

Full walkthrough: examples/quickstart-ts/.

The server + dashboard are Python — TypeScript runs npx awaithumans dev (via uv) so you never touch a Python env.

Self-hosted

    docker run -p 3001:3001 ghcr.io/awaithumans/awaithumans:latest

Or run `docker compose up` with the included docker-compose.yml (optional Postgres block inside). One image backs everything: API, dashboard, and channels.

Architecture

- Core primitive: one function, `await_human()` / `awaitHuman()`, typed-in-typed-out.
- Task store: SQLite in dev, Postgres in prod. Idempotency keys, atomic state transitions, audit trail.
- Channels: Slack + email today. Plug in your own by implementing a small interface (server/channels/).
- Verifiers: Claude, OpenAI, Gemini, and Azure OpenAI ship today; any other provider is a one-file adapter.
- Router: least-recently-assigned over a user directory with free-form `role` / `access_level` / `pool` labels.
- Task-type handlers: forms auto-generated from your Pydantic / Zod schema, rendered per channel (Slack Block Kit, email, web form).

Every customization flows through one of the four extension points: channels, verifiers, routers, or task-type handlers. That's the entire extension surface.
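As an illustration of the router strategy named above, here is a minimal sketch of least-recently-assigned selection over a labelled directory. The `Reviewer` dataclass, the `pick_reviewer` function, and the sample names are hypothetical; the actual router interface in awaithumans is not shown in this README and may differ.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Reviewer:
    name: str
    labels: Dict[str, str] = field(default_factory=dict)
    last_assigned: float = 0.0  # 0.0 means "never assigned"


def pick_reviewer(directory: List[Reviewer], now: float, **required: str) -> Optional[Reviewer]:
    """Pick the matching reviewer who was assigned longest ago."""
    candidates = [
        r for r in directory
        if all(r.labels.get(k) == v for k, v in required.items())
    ]
    if not candidates:
        return None
    chosen = min(candidates, key=lambda r: r.last_assigned)
    chosen.last_assigned = now  # record the assignment so the next pick rotates
    return chosen


directory = [
    Reviewer("ada", {"role": "finance", "pool": "refunds"}, last_assigned=10.0),
    Reviewer("bo", {"role": "finance", "pool": "refunds"}, last_assigned=5.0),
    Reviewer("cy", {"role": "support", "pool": "tickets"}),
]

picks = [pick_reviewer(directory, now=t, pool="refunds").name for t in (20.0, 21.0, 22.0)]
no_match = pick_reviewer(directory, now=23.0, pool="legal")
print(picks, no_match)
```

Filtering on free-form labels first and then taking the minimum `last_assigned` timestamp spreads tasks evenly across a pool without any shared counter.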

Documentation

- Quickstart: examples/quickstart/
- Full docs: docs.awaithumans.dev
- Contributing: CONTRIBUTING.md
- Security policy: SECURITY.md

Packages

| Package | Registry | License |
|---|---|---|
| awaithumans (Python SDK + server + CLI + dashboard) | PyPI | Apache 2.0 |
| awaithumans (TypeScript SDK) | npm | Apache 2.0 |
| ghcr.io/awaithumans/awaithumans (container) | GHCR | Apache 2.0 |

License: Apache License 2.0. Permissive, OSI-approved, with an explicit patent grant. Use it in proprietary stacks, fork it, ship it inside paid products: no fee, no contact required. The only thing the license asks is that you preserve the notice and don't use the project's trademarks without permission.

Status

v0.1.0 — public preview. Released 2026-05-11.

The full primitive, all three channels (dashboard / Slack / email), both durable adapters (Temporal / LangGraph), AI verification across four providers, and one-command self-hosting are all live in this release.

This is a young project. APIs are stable for v0.x, but expect rough edges in the long tail. File issues, open PRs, drop questions in Discussions or Discord. Every reproducible bug report will ship with a fix in v0.2.

For the post-launch roadmap — local task book for runtimes without an orchestrator, custom router strategies, post-launch hardening — see Roadmap & help wanted.
